Advancing Visual Information Retrieval: vviinn at VISAPP 2026

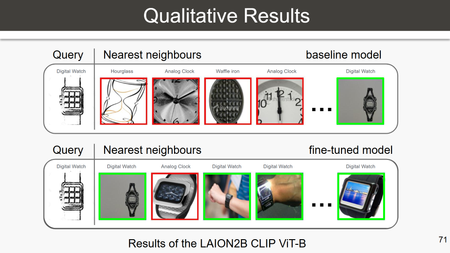

On March 10 at VISAPP 2026, vviinn’s Head of Research, Prof. Kai Uwe Barthel, explored the science behind intelligent visual discovery and why real-world commerce demands more than simply plugging in the latest AI model.

Hannah Schall
